What is HEXNULL and Why Autonomous Security Needs Architecture

Introduction

Autonomous security is not a feature.

It is not a chatbot wrapper around nmap.

It is not an LLM summarizing scan results.

It is not a prompt chained to a vulnerability scanner.

Autonomous security is an architectural problem.

HEXNULL exists to address that problem.

I build AI agents for penetration testing workflows: recon automation, CVE contextualization, log triage, and offensive security automation. Always through structured architecture.

This article explains:

- What HEXNULL is

- Why AI agents for penetration testing require system design, not scripts

- Why recon automation tools fail without orchestration layers

- Why offensive security automation must be architected

- And why “autonomous” without structure is just automation with marketing

This is not about replacing security engineers.

It is about augmenting them with engineered intelligence.

1. What HEXNULL Actually Is

HEXNULL is not a company.

It is not a hacker persona.

It is not an underground tool vendor.

HEXNULL is a pseudonymous independent AI Agent Architect focused on offensive security automation.

The core mission:

Design structured AI systems that operate inside penetration testing workflows.

The emphasis is deliberate:

- Structured

- Workflow-aware

- Architected

- Minimal

- Engineered

HEXNULL is not about building more scripts.

It is about building agent architectures.

2. The Problem With “AI in Cybersecurity”

Most current “AI security tools” fall into three categories:

- LLM wrappers around CLI tools

- Chat interfaces over logs

- Static ML classifiers for anomaly detection

These are tools.

They are not agents.

An agent must:

- Maintain state

- Make decisions

- Choose tools dynamically

- Interpret intermediate results

- Adapt strategy

Most AI security products do none of these.

They execute.

They do not reason.

And most importantly — they lack architecture.
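
The five requirements above fit in a small loop. This is a minimal sketch, not a HEXNULL implementation: the tool names `probe_dns` and `probe_http`, the `AgentState` fields, and the hard-coded results are all hypothetical stand-ins chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentState:
    """State carried across steps, so each decision can see earlier results."""
    target: str
    observations: list = field(default_factory=list)
    done: bool = False


# Hypothetical tools: each reads the state and returns a structured observation.
def probe_dns(state: AgentState) -> dict:
    return {"tool": "probe_dns", "hosts": ["a." + state.target]}


def probe_http(state: AgentState) -> dict:
    hosts = state.observations[-1].get("hosts", [])
    return {"tool": "probe_http", "live": hosts}


TOOLS: dict = {"probe_dns": probe_dns, "probe_http": probe_http}


def choose_tool(state: AgentState) -> Optional[str]:
    """Pick the next tool from what has been observed so far."""
    used = {obs["tool"] for obs in state.observations}
    if "probe_dns" not in used:
        return "probe_dns"
    if "probe_http" not in used and state.observations[-1].get("hosts"):
        return "probe_http"
    return None  # nothing useful left to do


def run(state: AgentState, max_steps: int = 5) -> AgentState:
    """Loop: decide, act, record. The step cap keeps autonomy bounded."""
    for _ in range(max_steps):
        name = choose_tool(state)
        if name is None:
            break
        tool: Callable[[AgentState], dict] = TOOLS[name]
        state.observations.append(tool(state))
    state.done = True
    return state
```

The second tool runs only because the first one produced hosts. That dependency on intermediate results is the difference between this loop and a fixed pipeline.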

3. Why Autonomous Security Needs Architecture

4. AI Agents for Penetration Testing: What They Should Actually Do

An AI agent in a pentesting workflow should:

- Understand scope

- Plan recon strategy

- Select appropriate recon automation tools

- Interpret results

- Identify promising attack surfaces

- Escalate depth where needed

- Discard noise

- Produce structured findings

Not just summarize.

Not just execute.

But orchestrate.

The difference is architectural.

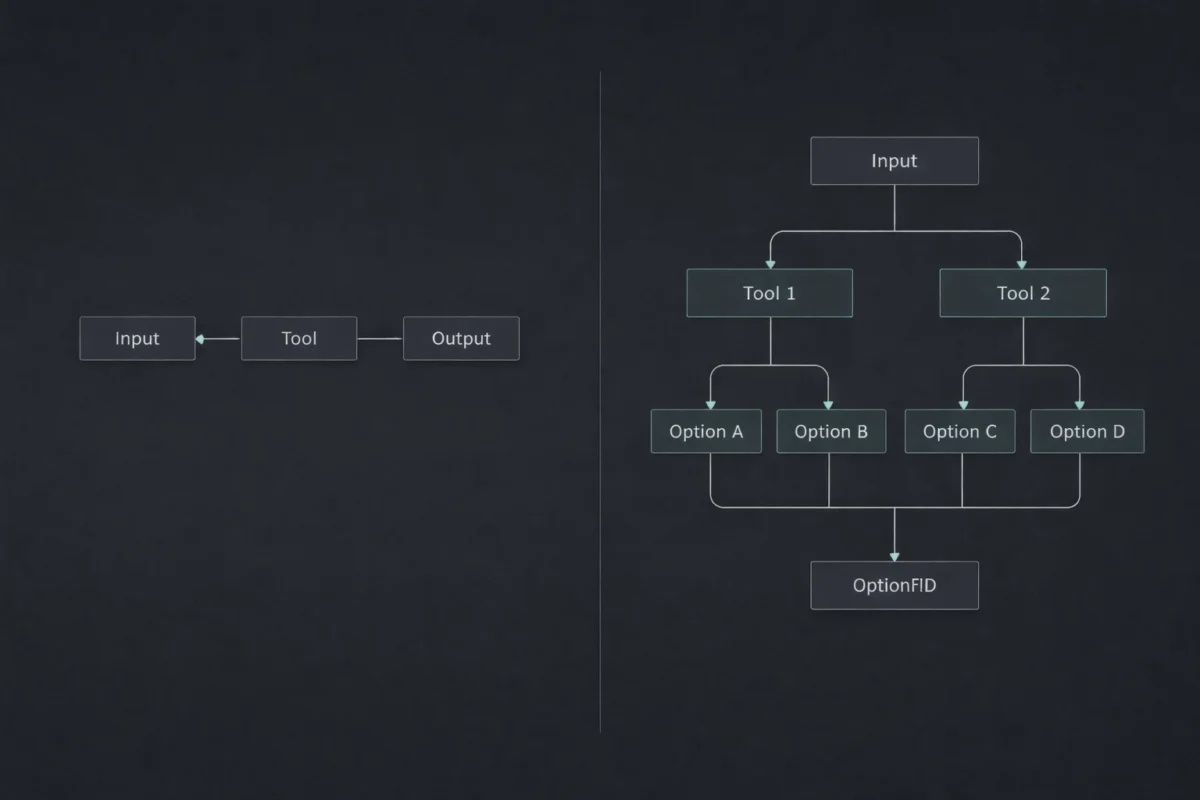

5. Recon Automation Tools Are Not Enough

Recon pipelines traditionally look like this:

- subfinder

- amass

- httpx

- naabu

- nuclei

- custom scripts

These tools are powerful.

But they are blind to context.

They do not:

- Decide when enumeration is sufficient

- Prioritize based on historical findings

- Stop when diminishing returns begin

- Adjust based on discovered tech stack

- Re-scope based on unexpected assets

An AI agent with architecture can.

Because architecture introduces:

- Decision trees

- Strategy modules

- Memory persistence

- Scoring systems

- Confidence thresholds

Recon automation tools are components.

Agents are orchestrators.
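
One of the gaps listed above, stopping when diminishing returns begin, can be sketched in a few lines. This assumes each enumeration round yields a batch of asset names. The 5% threshold and the function names are illustrative assumptions, not values taken from any tool.

```python
def should_stop(known: set, new_batch: set, min_gain: float = 0.05) -> bool:
    """True when a round adds fewer than `min_gain` new assets relative to what is known."""
    fresh = new_batch - known
    if not known:
        return not fresh  # first round: stop only if it found nothing at all
    return len(fresh) / len(known) < min_gain


def enumerate_until_flat(rounds, min_gain: float = 0.05):
    """Consume enumeration rounds until one of them stops paying off."""
    known: set = set()
    used = 0
    for batch in rounds:
        used += 1
        stop = should_stop(known, set(batch), min_gain)
        known |= set(batch)
        if stop:
            break
    return known, used
```

A real orchestrator would likely weigh asset value as well as count. The point is that the stopping rule lives outside the tools.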

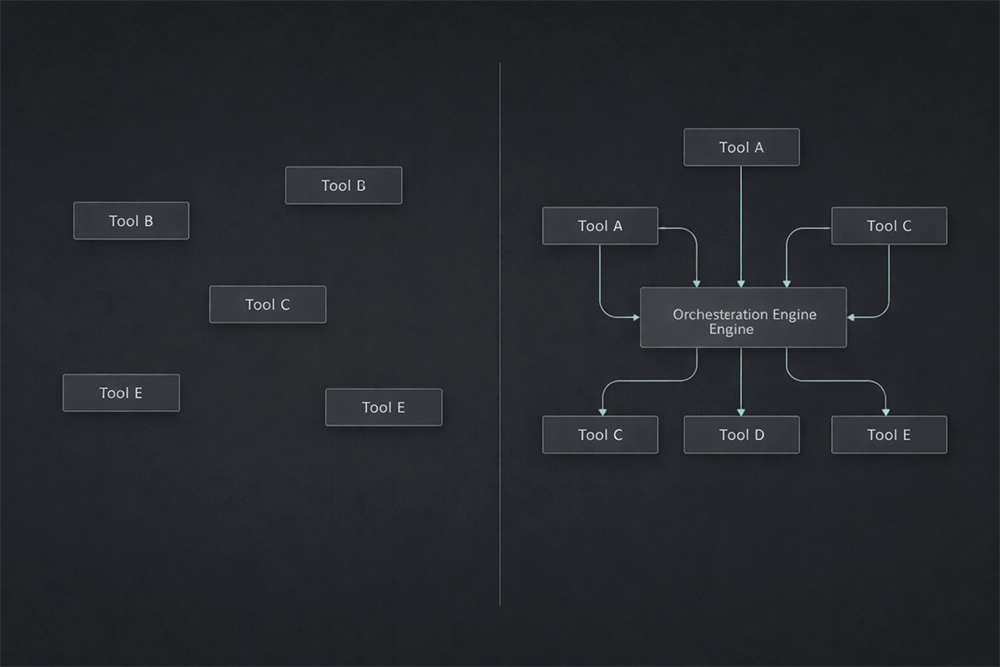

6. Offensive Security Automation Is a Systems Problem

Offensive security automation is not:

“Run more tools faster.”

It is:

“How do we structure intelligence across stages?”

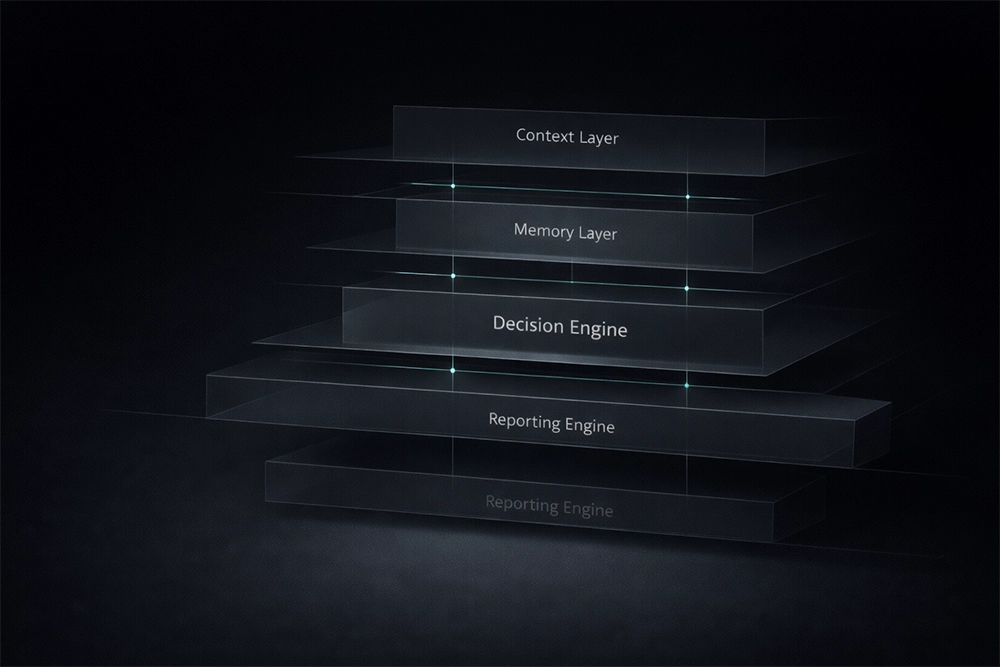

A proper architecture includes:

6.1 Context Layer

Defines:

- Engagement scope

- Asset boundaries

- Risk profile

- Historical knowledge
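
A sketch of the scope and asset-boundary part of this layer, assuming scope is expressed as domain suffixes, CIDR networks, and explicit exclusions. The `EngagementScope` class and its field names are hypothetical. Risk profile and historical knowledge are left out.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class EngagementScope:
    """Hypothetical scope object: what the agent is allowed to touch."""
    domains: tuple = ()    # in-scope apex domains, e.g. ("example.com",)
    networks: tuple = ()   # in-scope CIDR ranges, e.g. ("10.0.0.0/24",)
    excluded: tuple = ()   # explicit carve-outs that always lose

    def allows(self, target: str) -> bool:
        target = target.lower().rstrip(".")
        if target in self.excluded:
            return False
        try:
            addr = ipaddress.ip_address(target)
        except ValueError:
            # Not an IP address: treat it as a hostname and match on domain suffix.
            return any(target == d or target.endswith("." + d) for d in self.domains)
        return any(addr in ipaddress.ip_network(n) for n in self.networks)
```

Every tool call can be gated on `scope.allows(target)` before execution, so an unexpected asset is flagged instead of scanned.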

6.2 Memory Layer

Stores:

- Discovered hosts

- Technology fingerprints

- Exploitable patterns

- Failed attempts

Memory prevents repetition.
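
A minimal in-process sketch of that idea, assuming attempts are identified by tool, target, and parameters. `EngagementMemory` and its methods are hypothetical names. A real system would persist this to disk or a database, and would also track exploitable patterns.

```python
import hashlib
import json


class EngagementMemory:
    """Hypothetical memory store: remembers hosts, fingerprints, and past attempts."""

    def __init__(self):
        self.hosts = {}      # host -> set of technology fingerprints
        self.attempts = {}   # attempt key -> outcome, e.g. "ok" or "failed"

    @staticmethod
    def _key(tool: str, target: str, params: dict) -> str:
        # sort_keys makes the key independent of parameter order.
        blob = json.dumps([tool, target, params], sort_keys=True)
        return hashlib.sha256(blob.encode()).hexdigest()

    def remember_host(self, host: str, fingerprints=()):
        self.hosts.setdefault(host, set()).update(fingerprints)

    def record_attempt(self, tool: str, target: str, params: dict, outcome: str):
        self.attempts[self._key(tool, target, params)] = outcome

    def already_tried(self, tool: str, target: str, params: dict) -> bool:
        return self._key(tool, target, params) in self.attempts
```

Before running anything, the agent asks `already_tried(...)`. A failed attempt is recorded as carefully as a successful one.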

6.3 Tool Abstraction Layer

Each tool is wrapped in:

- Structured input format

- Normalized output schema

- Error handling

- Timeout policy

Agents should never consume raw CLI output directly.
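
A sketch of such a wrapper, assuming the wrapped tool prints one JSON object per line on stdout. That output format is an assumption for illustration, so check what each real tool actually emits. `ToolResult` and `run_tool` are hypothetical names.

```python
import json
import subprocess
from dataclasses import dataclass, field


@dataclass
class ToolResult:
    """Normalized result: the agent sees this, never raw CLI text."""
    tool: str
    ok: bool
    records: list = field(default_factory=list)
    error: str = ""


def run_tool(name: str, argv: list, timeout_s: float = 30.0) -> ToolResult:
    """Run a CLI tool with a timeout and normalize its JSON-lines output."""
    try:
        proc = subprocess.run(argv, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        return ToolResult(name, ok=False, error=f"timeout after {timeout_s}s")
    except OSError as exc:  # binary missing, not executable, and similar
        return ToolResult(name, ok=False, error=str(exc))

    if proc.returncode != 0:
        return ToolResult(name, ok=False,
                          error=proc.stderr.strip() or f"exit code {proc.returncode}")

    records = []
    for line in proc.stdout.splitlines():
        line = line.strip()
        if not line:
            continue
        try:
            records.append(json.loads(line))
        except json.JSONDecodeError:
            return ToolResult(name, ok=False,
                              error=f"unparseable output line: {line[:80]!r}")
    return ToolResult(name, ok=True, records=records)
```

Every failure mode collapses into the same `ToolResult` shape. The decision layer never has to guess whether empty output means "nothing found" or "tool crashed".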

6.4 Decision Engine

Evaluates:

- Result confidence

- Signal-to-noise ratio

- Escalation necessity

- Cost vs benefit
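
One way to make those evaluations explicit, as a sketch. The `Candidate` fields, the three outcomes, and both thresholds are illustrative assumptions. Signal-to-noise is folded into the single `confidence` number here for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A possible next action, scored on hypothetical 0..1 scales."""
    action: str
    confidence: float  # how much the triggering result is trusted
    value: float       # expected payoff if the action succeeds
    cost: float        # time, noise, or risk of running it


def decide(c: Candidate, min_confidence: float = 0.6, min_ratio: float = 1.5) -> str:
    """Return "discard", "escalate", or "defer" for a candidate action."""
    if c.confidence < min_confidence:
        return "discard"            # too noisy to act on
    expected = c.confidence * c.value
    if c.cost == 0 or expected / c.cost >= min_ratio:
        return "escalate"           # benefit clearly outweighs cost
    return "defer"                  # plausible, but not worth it yet
```

The exact numbers matter less than the fact that they are written down, versioned, and testable.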

6.5 Reporting Engine

Produces:

- Structured vulnerability objects

- Contextual CVE mapping

- Risk narrative generation

- Reproducible steps
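
A structured vulnerability object could look like the sketch below. The `Finding` fields and the severity vocabulary are assumptions for illustration, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field

# Hypothetical severity vocabulary; swap in whatever scale the engagement uses.
SEVERITIES = ("info", "low", "medium", "high", "critical")


@dataclass
class Finding:
    title: str
    asset: str
    severity: str
    cve_ids: list = field(default_factory=list)
    narrative: str = ""
    steps: list = field(default_factory=list)  # reproducible steps, in order

    def __post_init__(self):
        # Reject anything outside the agreed vocabulary at creation time.
        if self.severity not in SEVERITIES:
            raise ValueError(f"unknown severity: {self.severity!r}")

    def to_json(self) -> str:
        # sort_keys gives the same byte output for the same finding.
        return json.dumps(asdict(self), sort_keys=True)
```

Validation at construction means a malformed finding fails when it is created, not when the report is assembled.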

This is offensive security automation with architecture.

Without this structure, AI agents degrade into expensive scripts.

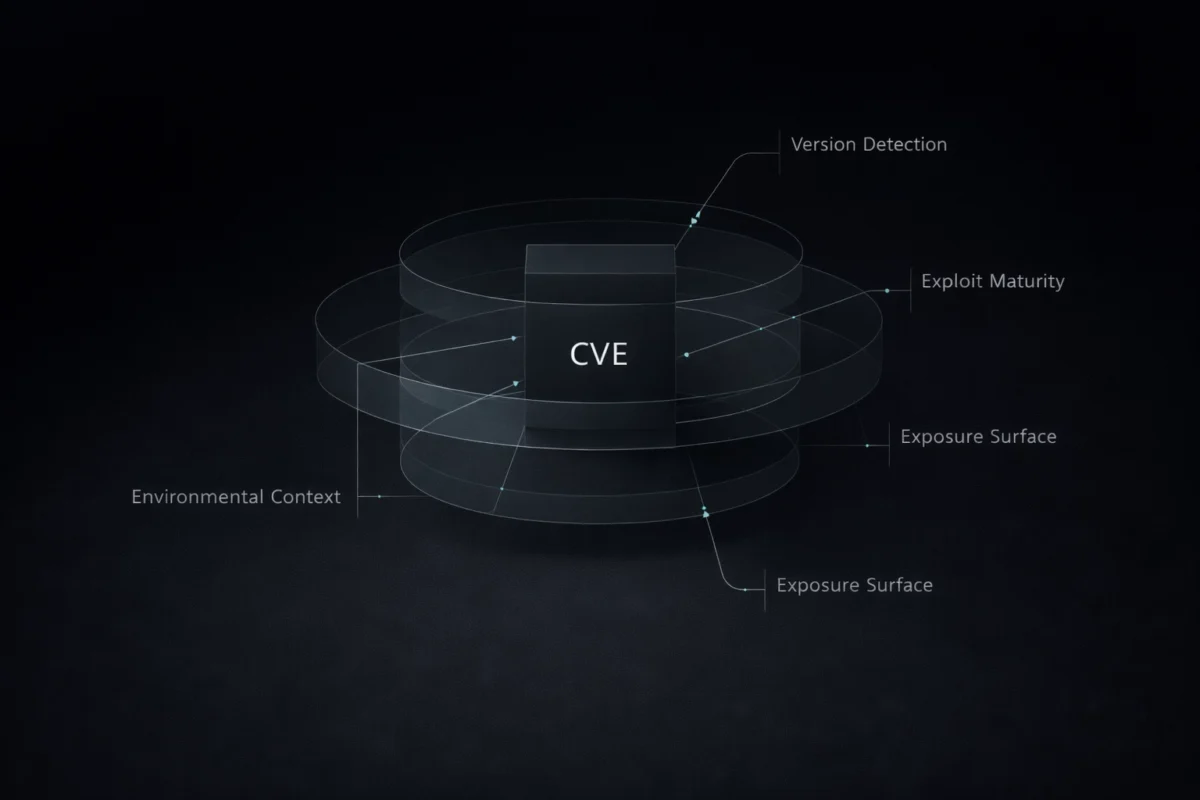

7. CVE Contextualization Requires Intelligence, Not Summarization

Summarizing CVEs is trivial.

Contextualizing them is not.

An architected AI agent should:

- Map CVE to detected version

- Assess exploit maturity

- Evaluate environmental exposure

- Cross-reference public PoCs

- Score exploit feasibility in context

Not:

“CVE-2023-XXXXX is a critical vulnerability allowing RCE.”

Context is what matters.

Architecture allows contextual reasoning.
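
A sketch of what scoring in context can mean. Everything here is hypothetical: the `Advisory` and `Detection` shapes, the maturity weights, the exposure factors, and the placeholder CVE identifier in the usage below. Version parsing only handles plain dotted numbers.

```python
from dataclasses import dataclass


def parse_version(text: str) -> tuple:
    """'2.4.49' -> (2, 4, 49). Only plain dotted numeric versions are handled."""
    return tuple(int(part) for part in text.split("."))


@dataclass(frozen=True)
class Advisory:
    cve_id: str
    first_affected: str    # inclusive lower bound
    first_fixed: str       # exclusive upper bound
    exploit_maturity: str  # "none", "poc", or "weaponized"


@dataclass(frozen=True)
class Detection:
    product_version: str
    internet_facing: bool


# Illustrative weights, not derived from any scoring standard.
MATURITY_WEIGHT = {"none": 0.2, "poc": 0.6, "weaponized": 1.0}


def feasibility(adv: Advisory, det: Detection) -> float:
    """0.0 if the detected version is not affected, else maturity scaled by exposure."""
    version = parse_version(det.product_version)
    low, high = parse_version(adv.first_affected), parse_version(adv.first_fixed)
    if not (low <= version < high):
        return 0.0
    exposure = 1.0 if det.internet_facing else 0.4
    return round(MATURITY_WEIGHT[adv.exploit_maturity] * exposure, 2)
```

For example, with a made-up advisory:

```python
adv = Advisory("CVE-0000-00000", "2.4.0", "2.4.50", "poc")  # fictional entry
print(feasibility(adv, Detection("2.4.49", internet_facing=True)))   # 0.6
print(feasibility(adv, Detection("2.4.50", internet_facing=True)))   # 0.0
```

The same CVE produces different scores on different hosts. That is the contextual part.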

8. Why Most AI Agents Fail in Security

Common failure patterns:

- No bounded autonomy: agents operate without limits.

- No tool constraints: agents hallucinate tool capabilities.

- No output schema: results become inconsistent.

- No iteration control: agents loop indefinitely.

- No separation between planning and execution

Architecture solves these.

HEXNULL builds agents with:

- Clear planning phase

- Execution isolation

- Structured intermediate states

- Deterministic logging

- Controlled iteration
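
A sketch of a planning phase kept separate from execution, assuming the planner emits a list of steps shaped like `{"tool": ..., "args": {...}}`. The tool names, the allowed arguments, and the step cap are hypothetical.

```python
# Hypothetical registry: the only tools and arguments the executor will accept.
ALLOWED_TOOLS = {
    "dns_enum": {"domain"},
    "http_probe": {"host", "port"},
}
MAX_STEPS = 10


class PlanError(ValueError):
    """Raised when a plan asks for something outside the registry or the limits."""


def validate_plan(plan: list) -> list:
    """Reject the whole plan before anything runs."""
    if len(plan) > MAX_STEPS:
        raise PlanError(f"plan has {len(plan)} steps, limit is {MAX_STEPS}")
    for i, step in enumerate(plan):
        tool = step.get("tool")
        if tool not in ALLOWED_TOOLS:
            raise PlanError(f"step {i}: unknown tool {tool!r}")
        extra = set(step.get("args", {})) - ALLOWED_TOOLS[tool]
        if extra:
            raise PlanError(f"step {i}: unsupported arguments {sorted(extra)}")
    return plan


def execute(plan: list, runners: dict) -> list:
    """Run a validated plan step by step and return structured intermediate states."""
    validate_plan(plan)
    log = []
    for step in plan:
        result = runners[step["tool"]](**step.get("args", {}))
        log.append({"step": step, "result": result})
    return log
```

A hallucinated tool name or an oversized plan fails validation before a single packet leaves the machine.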

9. Architecture Over Hype

Security does not tolerate randomness.

Pentesting is structured thinking.

If AI agents are introduced without structure:

- Findings lose reproducibility

- False positives increase

- Reporting becomes unreliable

- Engineers lose trust

Architecture ensures:

- Determinism where needed

- Bounded creativity

- Tool reliability

- Clear audit trails
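
One possible shape for an audit trail, sketched as a hash-chained log. This is an illustrative technique, not a description of how any HEXNULL agent logs. The `AuditLog` class and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(seq: int, prev: str, event: dict) -> str:
    body = json.dumps({"seq": seq, "prev": prev, "event": event}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, event: dict) -> dict:
        seq = len(self.entries)
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"seq": seq, "prev": prev, "event": event,
                 "hash": _digest(seq, prev, event)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for i, entry in enumerate(self.entries):
            if entry["seq"] != i or entry["prev"] != prev:
                return False
            if entry["hash"] != _digest(i, prev, entry["event"]):
                return False
            prev = entry["hash"]
        return True
```

The same sequence of events always yields the same hashes, so two runs can be compared entry by entry.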

Autonomy must be engineered.

10. The Role of HEXNULL

HEXNULL is not building generic AI tools.

The focus is specific:

AI agents for penetration testing workflows.

Current and upcoming systems include:

- Recon orchestration agents

- Log triage agents

- CVE contextualization engines

- Structured report generation systems

Each agent is built with:

- Tool abstraction layers

- Decision frameworks

- Memory modeling

- Output normalization

- Architecture documentation

The goal is not volume.

The goal is precision.

11. Security Engineers Are Not Being Replaced

Autonomous systems in security should:

Reduce:

- Manual triage

- Repetitive scanning

- Context switching

- Reporting overhead

Not replace:

- Critical thinking

- Exploit development

- Strategy design

- Ethical judgment

Agents augment.

They do not substitute.

12. The Future of Offensive Security Automation

We are moving:

- From tool execution to intelligent orchestration

- From script chaining to agent architecture

- From output aggregation to contextual reasoning systems

The shift is architectural.

And architecture determines durability.

Conclusion

Autonomous security is inevitable.

But autonomy without architecture is unstable.

HEXNULL exists to build structured AI agents for penetration testing and offensive security automation — engineered systems, not hype-driven wrappers.

If you are a security engineer or pentester looking beyond scripts, beyond dashboards, beyond LLM gimmicks:

Architecture is the next layer.

And it must be deliberate.

Explore Agents

Structured AI agents for penetration testing, recon automation tools, and offensive security automation are available for review.
